I was recently asked by an education policy student to explain my comment, “Quantitative research is covered-over qualitative research.” A good question!

When I was an experimental psychology student at Johns Hopkins University, I was intrigued by a professor’s derivations of equations. While few of us fully understood the statistics, we were impressed by our professor’s enthusiasm for mathematical theory and the complexity and mystery of the symbols that filled the blackboard.

When I reentered academia as a research professor, I soon realized that quantitative research findings are perceived as the gold standard because they are reported in numbers, algebraic symbols, and equations and, therefore, are considered more objective than qualitative findings. The mathematical terms serve as a seal of approval for the validity of the studies and their independence from researchers’ value judgments. Like the statistical derivations I encountered in my experimental psychology classes, the mathematical symbols are difficult to understand and, therefore, difficult to question or critique.

Qualitative research findings, in contrast, typically result from interviews, focus groups, or ethnographic studies and are presented as verbal analyses of key themes. The findings are generally more comprehensible to readers, but less prestigious in academia. They are, therefore, sometimes enveloped in theory, as if to strengthen their academic credentials.

No perfect method

Often, what appear to be irrefutable equations in quantitative research or erudite theories in qualitative research serve as analytic “covers” that mask the strengths and weaknesses of the research on which the analyses are based. Neither analytic method ensures the validity of the research or its immunity from subjectivity. The study’s methodological strengths and weaknesses are determined when researchers frame questions, choose samples and outcome measures, and collect data. These design decisions and their implementation are far more important determinants of research validity than are the subsequent data analyses, whether quantitative or qualitative. They are also the decisions where researcher subjectivity and bias are most likely to undermine the objectivity of the findings.

That is what I meant when I described quantitative research as covered-over qualitative research to my student. Supposedly objective measures in quantitative studies are still built on decisions researchers make, and these decisions are just as subject to bias as any case study or interview data that a more qualitative researcher might collect.

Why this matters

The belief that quantitative measures are more objective than qualitative measures matters when the findings are highly publicized and, therefore, have a direct impact on public policy decisions. International test score comparisons are examples of how a quantitative cover can mask intrinsic design flaws. These comparisons are considered objective because they use regression analysis and its associated models and equations. What often goes unnoticed, however, is that the layers of equations and assumptions are built on subjective choices about sampling, outcome measures, and other basic design decisions. The statistics mask, but do not eliminate, the subjectivity of these choices.

The choices vary from country to country and influence the test score rankings in different, and often unknown, ways. Countries make different choices, for instance, about how to educate students with disabilities, students in apprenticeship programs, high-poverty students, language-minority students, or migrant students. They also make different choices about which schools and students to include in the studies and about whether, and how, to account for children who are out of school and, therefore, not tested and represented in the comparisons.

The volumes of technical details and statistical symbols describing the analyses cannot compensate for the reality that the comparisons are among independent countries with widely varying gross domestic products, poverty rates, school retention rates, tracking practices, and public/private enrollment rates. The elaborate statistics cannot make up for the pervasive and inevitable sampling differences, the non-comparability of the data, or the resulting irrelevance of the findings to policy decisions. The statistics simply provide a cover.

We might prefer large- or small-scale studies. But whichever method we use, the terms quantitative and qualitative are meaningless in distinguishing between valid and invalid studies. Neither the equations of quantitative research nor the theories of qualitative research ensure objectivity. Both are influenced by value judgments about how to define the variables and what to include or exclude. Those research design decisions, not their analytic covers, determine the studies’ validity and relevance to public policy.

This article appears in the March 2024 issue of Kappan, Vol. 105, No. 6, pp. 64-65.

ABOUT THE AUTHOR

Iris C. Rotberg

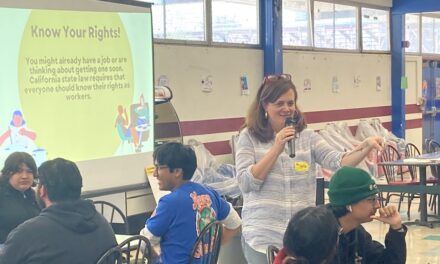

Iris C. Rotberg is a research professor of education policy at the Graduate School of Education and Human Development at The George Washington University, Washington, DC. She is the editor, with Joshua L. Glazer, of Choosing Charters: Better Schools or More Segregation?