Moving teacher evaluation systems from measuring teachers’ performance to improving their practice requires much greater attention to communication and support.

“Exactly what I needed.”

That’s how Principal Ramirez described the new teacher evaluation system her district was piloting. With training and support on using her district’s framework for evaluating teacher performance, Ramirez became accustomed to using evidence to rate her teachers’ practice and was enthusiastic about the system’s potential for improving their instruction. (All names in this article are pseudonyms.)

“Stifling.”

That’s how Ramirez’s teachers described the postobservation conferences that were part of the new teacher evaluation system. While teachers praised her leadership in other areas and saw immense potential in the new evaluation process, they said her approach to conferences — reciting questions directly from observation forms provided by the district and reading evidence collected during her observations — was too scripted to encourage conversations that could improve instructional practice. Ramirez acknowledged that her approach was not ideal. “I imagine I’ll get better at this,” she said.

How she will get better typifies the challenge facing districts as they revamp teacher evaluation systems with the dual goals of providing better measures of teacher performance and improving teacher practice. Communication and training are crucial to achieving these goals. In our work in Chicago and other Illinois school districts, we have seen districts’ initial communication and training efforts largely focused on rating teacher practice. This initial focus is appropriate and necessary because reliable ratings promote trust in the evaluation system.

But reliable ratings alone won’t improve teacher practice. Districts will also need a corresponding investment in communication about the system and its components and in training on using postobservation conferences for teacher development. Although the districts we studied have provided substantial training on rating teacher practice, they have typically paid less attention to the communication, training, and support required to grow this practice. As Ramirez’s teachers would attest, understanding the observation framework is just a starting point for conversations about instruction. Teachers and those who evaluate them (often school administrators) need training and support to understand the system and its goals and to move beyond reviewing ratings and evidence to engage in deep discussions that promote instructional improvement.

In fall 2012, Chicago Public Schools (CPS) instituted a sweeping reform of its teacher evaluation system when it introduced REACH Students (Recognizing Educators Advancing CHicago’s Students), replacing a 1970s-era checklist rubric and, for the first time, incorporating a detailed classroom observation process and student growth measures into teacher evaluations. Researchers at the University of Chicago Consortium on Chicago School Research have investigated teacher evaluation reforms in Chicago and other districts in Illinois from 2008 to the present (Sartain, Stoelinga, & Brown, 2011; White, Cowhy, Stevens, & Sporte, 2012; Sporte et al., 2013). This article draws on that research to present examples of the communication and support some districts have provided for rating teacher practice, as well as areas where communication and support are crucial for moving from solely measuring teacher practice to improving it.

Communication and training on rating teacher practice are vital precursors to improving instruction.

It makes sense that districts’ initial communication and training efforts have focused on improving administrators’ abilities to reliably rate classroom instruction. Rubric-based classroom observation protocols (such as the modified version of Charlotte Danielson’s Framework for Teaching used in Chicago) allow administrators to provide more detailed and specific ratings of classroom instruction. This increased specificity requires training to ensure ratings are based on evidence and are aligned to what the district has determined constitutes high-quality instruction.

Many districts appear to do this well. Across Illinois, potential evaluators are now required to use an online training tool that includes videos, practice ratings, and quizzes. The training culminates in a certification assessment that administrators must pass before they may rate classroom instruction for evaluation purposes. Some districts have gone beyond this required training to include additional practice with aligning evidence and assigning ratings in workshops throughout the school year. Chicago Public Schools hired and trained specialists to serve as calibrators. These specialists conducted classroom observations alongside school administrators and then compared their own ratings and accompanying evidence to those of the evaluating principal or assistant principal.

The training efforts appear to have paid off in Chicago, where three-quarters of the administrators we surveyed felt prepared to rate teachers using the observation framework. They rated their understanding of the framework as strong or very strong. In a survey administered at the same time to a sample of teachers, 72% of teachers who had received ratings said their ratings were the same as or higher than they thought they should have been.

These early promising results in Chicago don’t suggest that districts can train their administrators at the beginning of implementation and be done with it. There will always be new administrators who need training; in addition, continual training will be needed to prevent problems such as rater drift — where an administrator unintentionally redefines scoring criteria over time (Harik et al., 2009).

Teachers need to understand how the system will be used for evaluation and professional growth.

In addition to training administrators to rate teacher practice, districts must actively communicate with teachers about the new system, its goals, and its intended uses. As teacher evaluation ratings are increasingly used in personnel decisions, clear communication can underscore the importance of the formative purpose of the new systems and help build trust and buy-in. In the absence of such communication, misunderstandings about the system’s goals can stymie its potential for promoting growth. For example, a belief that the evaluation system is intended “to weed out teachers who aren’t doing their job” (as we heard in interviews in Chicago) may undermine improvement efforts. Teachers may be reluctant to openly expose their instructional weaknesses if they fear this puts them at risk of receiving a poor rating. It is easy to see how these teachers may engage with the system differently from the teacher who told us, “REACH is a series of rubrics that you can use to improve your teaching practices.”

Similarly, teachers need an understanding of how different components of the evaluation system, such as observation ratings, standardized tests, and other student assessments, factor into their final rating. In Chicago, there was limited communication about this calculation, and many teachers were confused about it. One teacher explained, “I am concerned about my effort as a teacher completely relying on the test scores of my students.” Yet, in the initial year of implementation, student test score growth accounted for no more than 25% of a teacher’s evaluation score. As with the concerns over personnel decisions, misinformation about the weight given to various components could distract teachers from viewing the system as a tool for improvement.

The districts we studied used a combination of strategies to communicate details of the new systems to teachers. Several districts made information available to teachers through optional districtwide training sessions and district-maintained web sites. Some also relied on school principals to provide information to teachers. Principals’ workloads and differing capacities made this reliance problematic, and information relayed to teachers varied widely across schools. None of these strategies was particularly successful at ensuring that all teachers had a thorough understanding of the new systems.

Districts that mandated training for teachers had more success. For example, in one downstate district, the central office sent informational emails to teachers and required them to attend presentations in addition to trainings provided by individual principals. As one teacher described it, “[the district] wanted every teacher to get the exact same message about it, and they did an outstanding job on this.” The mandatory nature of the trainings signaled to teachers that this initiative was a high priority and that having teachers understand the system was important to the district.

All participants need to understand the classroom observation rubric.

As we noted earlier, evaluators need to clearly understand the observation rubric in order to make accurate ratings. But teachers also need training about this rubric for it to serve as the basis for instructional improvement. In all of our studies, we found that participants welcomed the new specificity — even if they didn’t fully understand the framework. For example, in our most recent work in Chicago, we found that fewer than half of teachers reported having a strong or very strong understanding of the framework. In spite of this lack of concrete understanding, surveys and interviews provided ample evidence that practitioners welcomed the framework’s clear expectations and definitions. According to one teacher, “The observation rubric describes what really good teaching looks like. It gives me a clear description of what teaching looks like at each level.” Interviews with practitioners in the earlier pilot and in downstate Illinois showed similar enthusiasm.

In addition to clear expectations about strong instructional practice, the framework provides a common language for talking about teaching, an important foundation for good postobservation conversations. Both administrators and teachers found that having such a common language resulted in conferences that were more reflective and more focused on improvement. When comparing the framework to the previous system, one teacher explained, “the framework gave me some direction . . . I know what they’re looking for . . . I felt like the conversations I had in my conferences were really helpful.”

Coaching is a skill: Supporting productive conversations between teachers and administrators is crucial to improving teacher practice.

As we heard multiple times, having clear expectations is an improvement over the old evaluation process. Yet, as we saw in the opening example of Ramirez and her teachers, there is a lot of room for improving these conferences, which are where both teachers and administrators can grow. The common language of the framework and evidence-based ratings are a great foundation, but they do not automatically result in the kind of reflective and constructive dialogue that supports instructional improvement. Such conversations rely not only on participants understanding the framework but also on their engaging effectively in the conference process.

Principal Andrews, a participant in Chicago’s pilot, had many such conversations with teachers. Her postobservation conferences were marked by healthy debate over ratings and evidence and by dynamic discussions focused on instructional improvement. Nearly all her teachers felt that they improved their practice by using the framework, and most identified the conferencing process as a critical aspect of that change.

In the same study, we saw stronger principals engaged in conversations in which teachers could explain their viewpoints, discuss improvement strategies, and, in some cases, challenge the principal’s interpretation of the instructional practice. While some principals, like Andrews, may already have skills in this crucial area, many, such as Ramirez, would greatly benefit from support.

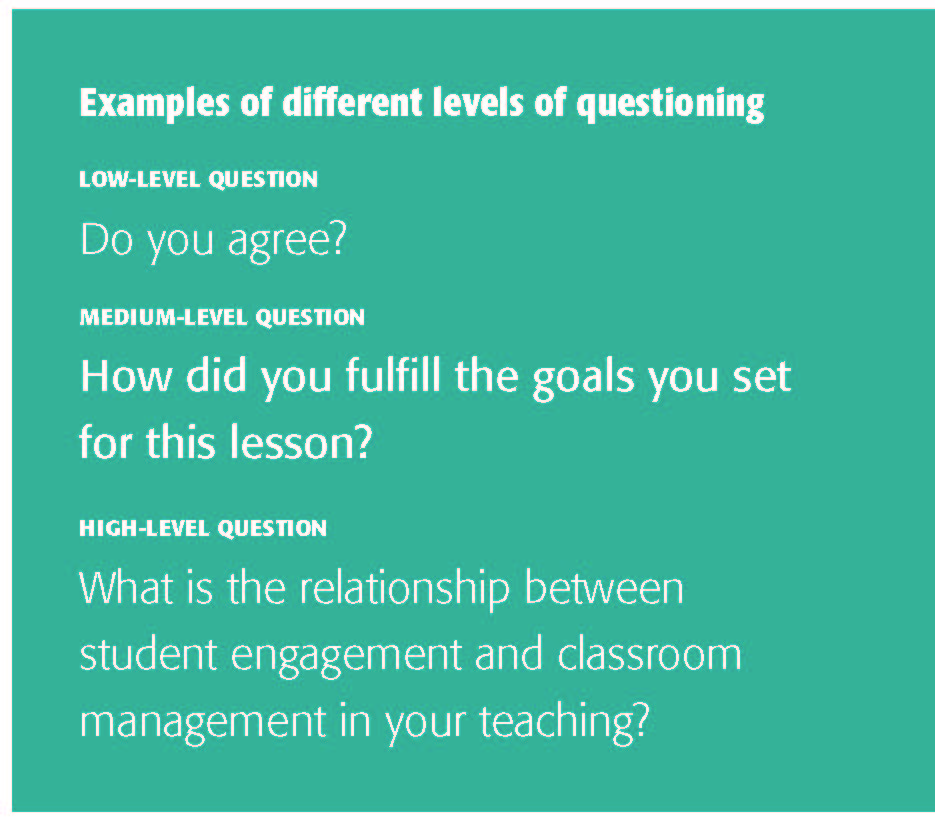

She is not alone. During the pilot, we found that principals dominated postobservation conversations, accounting for 75% of the questions and comments. In addition, most of their questions required little reflection or explanation from teachers. Only 10% of principals’ questions required extensive teacher response and reflection about instructional practice. On the other hand, 65% were low-level questions, which required limited teacher response. Overall, about half of the principals in the sample asked predominantly low- and medium-level questions, while the other half asked mostly medium- and high-level questions (see box for examples of question levels).

Conducting productive conversations that are focused on improvement is an important skill, and administrators need more support in this area. In the districts we studied, professional development for administrators focused more on rating teaching practice than on conducting postobservation conferences.

Administrators are aware that they need such support. In our recent survey, 80% of CPS administrators said professional development in coaching teachers to improve their practice was the highest priority. District specialists hired to support principals in rating their teachers accurately found that many principals wanted help conducting conferences with teachers. Some principals felt anxious about difficult conversations with lower-performing teachers, while others were concerned about how to support the growth of all their teachers.

The complexity of knowing what is appropriate to spur growth for each individual teacher underscores the need for training. One principal expressed her frustration in this way: “There’s 15 things they need to get better at, and so 15 of them are important. Where do I begin?” Another focused on the opposite problem: “An area that I still struggle with is . . . when somebody’s doing something wonderfully, how to be a good thought partner with making it better.” Districts and principal training programs can help develop the skills to hold effective conferences; such skills include analyzing and sharing data collected through classroom observations and building and maintaining trust even when broaching sensitive topics (New Teacher Center, 2012).

Administrators are not the only ones who need support. In our study of the pilot, principals pointed out that their level of questioning and the amount of direction they gave depended on the degree to which they believed each teacher was capable of self-assessment. Many teachers don’t have experience engaging in this kind of conversation with their administrator. As a CPS teacher explained:

The postconference was good. It was a little bit challenging for me though because she asked me first how I thought I did. And I didn’t want to tell her. ‘You tell me how I do!’ It was a two-way conversation, and that’s the first time I’ve had an observation with an administrator like that. Yes, this was much different than my observations in the past.

Quality conversations that enable all participants to grow depend on both sides coming to the table knowing the framework and how to use it in a collaborative, constructive dialogue.

What it will take to measure up

Districts are reforming their teacher evaluation systems with an eye toward better measuring and improving teacher practice. In order to fully realize this opportunity to grow the capacity of teachers and administrators, districts must expand their focus beyond obtaining accurate ratings. They must dedicate time and resources to communication and training in three additional key areas. First, communication must make explicit that evaluation and growth are system goals, and all participants need a clear and accurate understanding of how teachers will be evaluated. This is necessary to establish the openness and trust required for growth. Next, teachers and evaluators must have a strong understanding of the common language provided by the observation framework. Finally, teachers and administrators can benefit from supportive training on how to engage in productive conference conversations. The challenge for districts going forward will be to keep administrators’ rating skills honed, while providing teachers and administrators the communication and support they both want and need.

References

Harik, P., Clauser, B.E., Grabovsky, I., Nungester, R.J., Swanson, D., & Nandakumar, R. (2009). An examination of rater drift within a generalizability theory framework. Journal of Educational Measurement, 46(1), 43-58.

New Teacher Center. (2012). Supervision to build mentor experience. Santa Cruz, CA: Author.

Sartain, L., Stoelinga, S.R., & Brown, E.R. (2011). Rethinking teacher evaluation in Chicago. Chicago, IL: Consortium on Chicago School Research.

Sporte, S.E., Stevens, W.D., Healey, K., Jiang, J., & Hart, H. (2013). Teacher evaluation in practice: Implementing Chicago’s REACH Students. Chicago, IL: Consortium on Chicago School Research.

White, B.R., Cowhy, J., Stevens, W.D., & Sporte, S.E. (2012). Designing and implementing the next generation of teacher evaluation systems: Lessons learned from case studies in five Illinois districts. Chicago, IL: Consortium on Chicago School Research.

Citation: Hart, H., Healey, K., & Sporte, S.E. (2014). Measuring up. Phi Delta Kappan, 95 (8), 62-66.

ABOUT THE AUTHORS

HOLLY HART is a senior research analyst at the University of Chicago Consortium on Chicago School Research, Chicago, Ill.

KALEEN HEALEY is a senior research analyst at the University of Chicago Consortium on Chicago School Research, Chicago, Ill.

SUSAN E. SPORTE is director for research operations at the University of Chicago Consortium on Chicago School Research, Chicago, Ill.