The flexibility of the charter school law in New Mexico allowed one school to design a growth and evaluation plan that helped teachers and students.

How can we create an evaluation process that inspires teachers to develop throughout their careers, seek feedback from peers and students, and collect accurate data about student learning? Committed to being a charter school with a professional learning community that empowers teachers, South Valley Academy (SVA) staff transformed the state evaluation process into a practitioner action research process (Anderson, Herr, & Nihlen, 2007). While teachers self-diagnose growth needs and play active roles in improving their own practice, SVA's new model holds them accountable for addressing student performance challenges and requires classroom-generated data to measure how teaching practices affect student learning. Because it relies on artifacts that teachers collect as evidence and on an action-research timeline, built into the school calendar, that allows for collaboration and feedback, the rigorous evaluation process both nurtures and measures teaching effectiveness.

A new PD plan template

New Mexico requires teachers to submit annual professional development plans (PDPs) that include improvement objectives, action steps, and measures of desired results. For most SVA teachers, PDPs were little more than an administrative hurdle: filling out paperwork, undergoing random observations, and signing off on goal completion. Administrators judged whether the teacher was meeting expectations and could optionally add brief comments.

While the state evaluation plan asks teachers to set an objective related to one of nine teacher competencies, none of SVA's 2008-09 PDPs targeted actual student performance. To shift from setting an instructional practice goal to setting a measurable student-learning goal, SVA staff experimented with revising the state form so teachers could use it to plan individual practitioner action research projects (Figure 1). The narrative asks for a goal with a measurable student outcome. The rationale tells the story behind the goal, explaining how the teacher identified the learning gaps or skill deficits, and the action steps describe the problem-solving process. The instructions expand the list of possible observers and suggest a variety of resources to use and evidence to collect throughout the year.

To illustrate how the new PDP process works, we tell the story of Andres Plaza, a beginning teacher who decided to focus on vocabulary instruction in his chemistry class. In his rationale, he described the frustration students experience with chemistry vocabulary:

Stoichiometry, huh? Titration, what? Sodium hypochlorite, who? Chemistry is more than just learning about the interactions of matter. Chemistry is like learning another language, a language that codes for complex interactions and physical phenomenon. And, if chemistry is studied without an understanding and use of this other language, it is as if you are speaking Spanish to a Brazilian. It doesn’t make much sense. Thus, when I reflect about how I can enhance learning in chemistry, I remember that I am teaching more than a content area; I am teaching a new language.

Plaza’s goal was for 80% of his students to learn the necessary vocabulary to understand every content-related, targeted skill; the previous year, only 58% of his students learned all the content vocabulary necessary to demonstrate such proficiency in lab reports.

Plaza brainstormed with colleagues to learn how they develop students' vocabulary, which books about building vocabulary to consult, and whom to observe teaching vocabulary. His PDP action steps included trying a variety of strategies: slide presentations, hands-on activities, two-column notes, flashcards, word walls, art posters, and games (Around the World, Mile a Minute). Plaza made students aware of his PDP goal and involved them both in monitoring their own progress toward it and in providing input on classroom instruction.

For Plaza, the PDP process gives his teaching rigor and visibility:

It forces me to use data and classroom observations to identify learning gaps or skill deficits, which, in turn, helps me focus on finding resources to learn about and improve my instructional strategies. Perhaps most important, the process gives me a chance to show off what I am learning, the cool or new things I am trying in my classroom as a result of that learning, and the learning gains based on the new strategies. I have an opportunity to show that I’m not a master at teaching everything, but I know how to identify learning deficits, I know how . . . to address them, and I am going to take a risk and try some new things I might not be comfortable with in order to improve my teaching practice. [T]his . . . allows me the space to define my own plan by which I will be evaluated. To me, this is fair and empowering. A negative evaluation would be because I didn’t follow my own plan, not because I didn’t follow a plan that someone else laid out for me.

Developing a PDP rubric

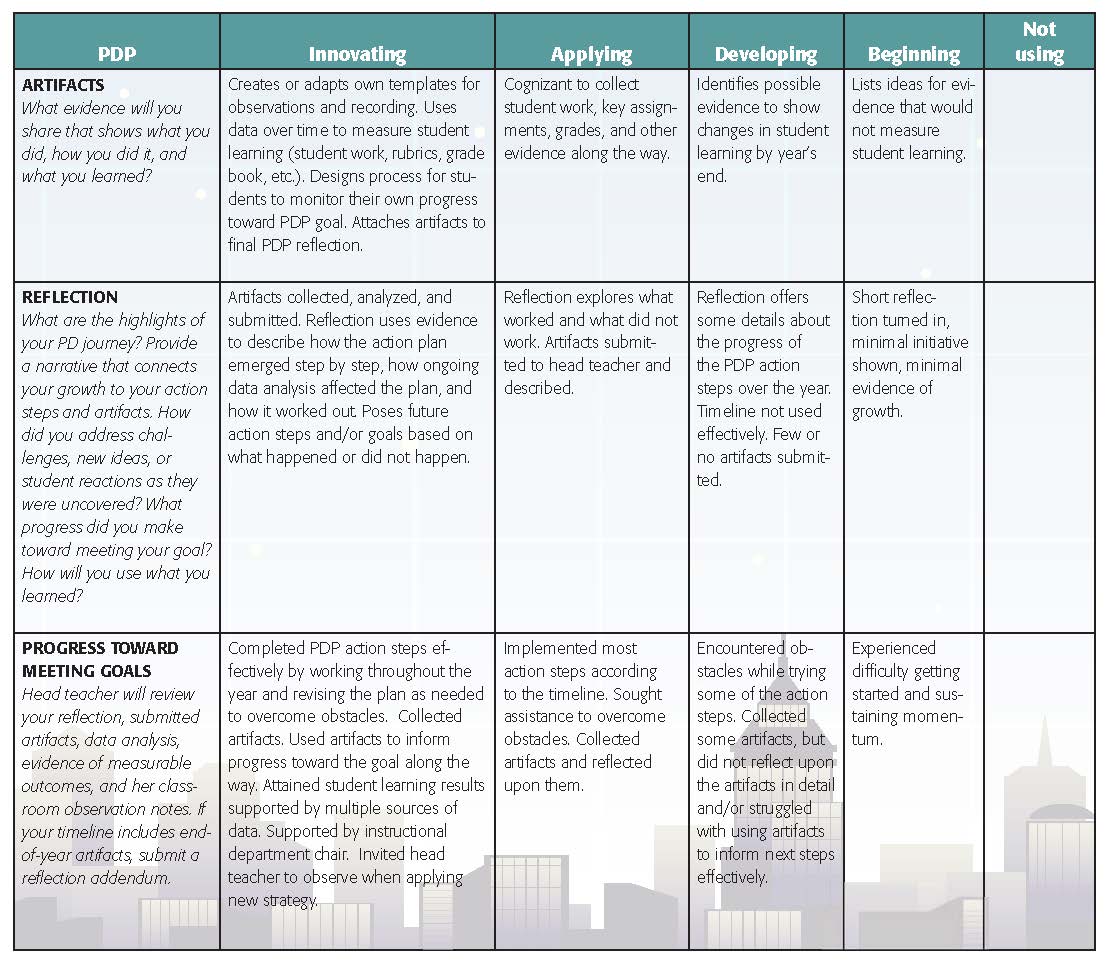

To help teachers more clearly understand the new PDP process, we developed a rubric with extensive staff input to describe quality indicators for each narrative component (Figure 2). The rubric uses language adopted from Robert Marzano’s (2011) measurement ratings for effective teaching — innovating, applying, developing, beginning, and not using. By including the innovating category, we encourage risk taking and resourcefulness rather than safe goal setting.

Becoming familiar with the rubric required a careful introduction to wording and assessment language that is more growth-oriented than accountability-oriented. Teachers needed examples to distinguish innovating from applying, to clarify what counts as risk taking and who is taking the risk, to understand why rationales require "thoughtful reasons" and "creative strategies," and to realize that manageable action steps depend on licensure level and personal or professional circumstances.

SVA’s schedule includes weekly time for full-staff meetings, department meetings, grade-level meetings, ed-teaming, and individual or group work on PDPs. The PDP timeline includes six weeks at the beginning of the year to receive guidance and non-evaluative peer feedback on initial PDP drafts. Teachers then revise their PDPs and use the rubric to self-assess before submitting their PDPs to the head teacher. After reviewing and assessing the PDPs with department chairs, the head teacher passes on written feedback and meets individually with teachers who need revisions or who are self-assessing higher than the head teacher’s rubric scores.

At the beginning of the year, the head teacher assesses only the first five components on the rubric: the goal, rationale, measurable student outcomes, actions/timeline, and resources. After these components are approved, our PDP schedule reserves seven early-release afternoons for individual and group work, giving teachers time to get help, design data-collection instruments, review student work and data, and use data for lesson planning. Teachers are encouraged to revise PDPs as their practitioner action research projects evolve and can resubmit them for re-evaluation. Plaza resubmitted his PDP to raise his goal score from developing to innovating after peers helped him identify specific strategies for vocabulary instruction.

At the end of the year, the head teacher assesses the last three components of the rubric: artifacts (evidence), reflection, and progress toward meeting goals. Good end-of-the-year reflections show the growth journey: what influenced our thinking, decision points during the year, how each action step informed the next one, lessons learned, and aha moments. Final reflections include data, data analysis and interpretation, and genuine next steps for the upcoming year. While typical final reflections in 2008-09 were no longer than two paragraphs, current reflections average five single-spaced pages with six to 12 artifacts attached. Teachers have become more invested in the PDP process.

Skill development

Meaningfulness comes with time, as teachers like Plaza develop the skills to plan manageable and relevant PDPs that improve teaching and learning. Plaza's final reflection included data showing the distribution of vocabulary mastery scores for each content area, an analysis of every vocabulary strategy he implemented, and 14 artifacts, including sample slide presentations, word walls, art posters, lab reports, and tests. By tracking targeted skill scores for each content area, Plaza saw which skills students mastered at high rates and which ones they struggled with. His final reflection touched on his growth through the PDP experience:

My PDP for the 2011-12 school year was the most challenging in my three years at South Valley Academy. [T]his year I focused on vocabulary instruction, something I never really had to worry about in my own education as well as a topic I have little formal training on how to instruct. At the end of this year of experimentation, I may not have skillfully executed the instructional techniques I was trying to learn, but I learned some big lessons about effective teaching strategies and even bigger global lessons about how students learn and acquire vocabulary. I look forward to next year when I can continue to focus on vocabulary instruction and a data-based approach to improving those skills with which students struggled.

The head teacher evaluates each PDP and writes extensive feedback to each teacher. When she evaluates progress toward goals, the difference between effective teachers (Applying) and excellent teachers (Innovating) becomes evident in how they deal with obstacles, use input from others, observe and listen to students, and adjust instruction in response to data. So when evaluating the final component of the rubric, Progress Toward Meeting Goal, the head teacher assesses not whether teachers met their goals, but whether they made adequate progress toward them.

When reviewing Plaza's final reflection, the head teacher noted that he didn't meet his PDP goal. However, he earned a progress score of Innovating: his students reached a 66% mastery rate for every targeted content skill, he took a risk by raising mastery expectations, he collected artifacts throughout the school year, he thoroughly analyzed the effectiveness of the new vocabulary strategies, and he adjusted instruction flexibly in response to data. Plaza's evaluation score shows both accountability and growth, and it illustrates our measure of teaching effectiveness: a teacher's ability to identify a student-performance challenge, to collect evidence that systematically addresses this challenge, and to adjust instruction based on the evidence.

Building a culture of feedback and improvement

Teachers initially struggled to write professional growth goals related to student learning, so we keep one question in mind: What effect will this instructional change have on students' learning? Measurement can be stated in several ways: as a percentage of overall improvement, as targeted improvement from established starting points, or as improvement over last year's results. After several rounds of feedback on goals and measurements, teachers seek professional resources to address student learning challenges and develop action plans. The school gives each teacher a $300 discretionary professional development budget, and teachers can request more support.

Teachers need help thinking about which artifacts to collect: evidence from past years to develop PDP goals and evidence from the current year to demonstrate progress. (See Figure 1.) To support teachers in collecting evidence, we build in professional development time for reviewing examples of classroom data-collection instruments that track and display learning progress. We look at qualitative and unobtrusive data (student work, observations, videotaping, student self-evaluations) that could provide a growth comparison, and we practice aggregating such data to see what patterns emerge.

SVA prioritizes PDP work, reserving time during normal school hours to put teachers in the best possible position to succeed on their PDPs, which adds fairness to the evaluative component. The PDP work timeline includes time for sharing and analyzing artifacts with peers and for arranging observations by the head teacher and colleagues when teachers implement a PDP strategy. Often, nonevaluative observations require multiple short classroom visits followed by a conversation with the teacher. How effectively teachers seek, document, and apply observers' input affects their final evaluation for Progress Toward Meeting Goal.

Initially, a practitioner action research specialist, Shelley Roberts, modeled for the head teacher what to look for in a PDP: alignment between the goal and the measurable outcome, between the measurable outcome and the artifacts, and between the artifacts and the action steps. Department chairs help teachers narrow goals, identify effective strategies, design methods for assessing their effect on student learning, determine how department meetings can support PDP work, and clarify when classroom observations and examining student work would be most useful. Teachers continue to request support for identifying strategies, collecting artifacts, and analyzing data. Over three years, the share of teachers rated Applying or Innovating on the Artifacts component increased from 65% to 95%.

Effect on staff culture

How did the new PDP model affect staff culture? Staff meetings shifted from dealing mostly with logistics about school life to prioritizing the planning and implementation of practitioner action research projects. The professional learning community changed as well. Rather than participating in study groups focused on a common topic, teachers collaborate in cross-department support groups to review PDPs and share artifacts. Thinking aloud forces teachers to reflect on the scope of their goals, how clearly their words capture their intent, how to integrate data collection into teaching routines, and what the evidence shows. Because teachers know that colleagues' eyes are with them rather than on them, the culture of observation grows.

The isolation associated with PDPs ended; PDPs became more authentic. With practitioner action research as the professional touchstone, teachers eagerly talk about classroom challenges. Receiving feedback on PDP narratives lowers anxiety, produces quality revisions, and puts into place clear and manageable action steps. Peers often offer different ways of seeing students and interpreting data — collective creativity (Louis & Kruse, 1995). Plaza gained invaluable insight from his peers:

These collaborations are perhaps the most powerful and useful part of the PDP process. Staff became aware of the many learning gaps . . . and we began working collaboratively to share and find ways to address those learning gaps. Many times, other staff members noticed things that were happening that I hadn’t noticed before . . . . [T]eachers together can start noticing trends, and then the project is even more meaningful. The PDP process has organically led some of us to addressing skill gaps that might not just be content specific, but to overall academic skill deficits. Having a forum to share effective strategies for student learning opens the door for every teacher to help every other teacher get better. When other people start doing new things because of other people’s PDPs, this makes the process even more powerful.

All these changes lead to courageous conversations about teaching practices, steering teachers away from blaming low performance on students. Teachers look more closely for patterns of student learning and misunderstandings. We push each other’s thinking, offering complementary and opposing viewpoints, which adds excitement and encouragement to the PDP process.

PDPs are no longer just an administrative hurdle. Rubric scores hold teachers accountable for their goals, but the head teacher’s accompanying comments boost morale by affirming the risks and accomplishments, suggesting possible next steps, and assuming that teachers do best when people know what they are doing. Her final summary to the staff about PDPs pushes teachers to excel and stimulates SVA’s culture of teachers as learners, teachers as experts — a professional learning community that encourages teachers to tackle more rigorous improvement goals and honors them as self-directed learners with knowledge to share (Mielke & Frontier, 2012).

The rubric for guiding and measuring progress initially met with some confusion and resistance, but reviewing and revising it together clarified expectations. When we displayed aggregated rubric scores for several years, staff saw growth in all areas of designing and implementing a PDP. Over three years, the share of teachers rated Applying or Innovating on Progress Toward Meeting Goal increased from 70% to 95%, affirming that SVA's new evaluation process can improve teacher practice, increase student learning using classroom-generated data, and develop skills required for New Mexico's licensure dossiers and National Board certification portfolios.

Action research as a measure of effectiveness

Does SVA's new PDP process identify teaching strengths and weaknesses? Grouping the progress data by licensure level (1-3) showed that Level 3 teachers, the most experienced, initially struggled more than teachers at Levels 1 and 2 to make adequate progress toward their professional development goals. This disparity continued into the second year, suggesting that teacher preparation programs had begun preparing new teachers to conduct research about their own classrooms and schools (Cochran-Smith & Power, 2010). Targeted interventions and individual conferences with the head teacher helped veteran teachers overcome ingrained habits from the former evaluation model, and the collaborative process modeled the new expectations. For some, PDP goal setting became a remediation strategy.

New Mexico outlines a process for evaluating staff with different licensure levels. As teachers advance in their careers, progressive documentation is required about nine competencies related to instruction, student learning, and professional learning. Through SVA's PDP process, teachers and the head teacher naturally build the evidence the state requires, aligning evaluations with statewide standards for teaching that are related to meaningful student learning (Darling-Hammond, 2012). If a teacher shows little promise of growth in meeting student-learning challenges over several years despite extensive mentoring, the principal and head teacher review the teacher's overall performance: institutional evidence of growth in students' academic skills and habits, professional innovation and initiative, advising, committee work, and contributions to the school community. The PDP process was a good barometer of quality effort and work, and the school did not renew several staff contracts after extensive remediation.

After experimenting with the PDP process for four years, SVA has learned that its practitioner action research model directs time and money toward an evaluation system that helps teachers improve through a culture of feedback (Danielson, 2010/2011). While nurturing teacher performance, SVA can document yearly progress systematically with diverse evidence from multiple sources. With a fair and transparent process, SVA can assess teacher quality effectively by using evidence of student learning generated in classrooms.

References

Anderson, G., Herr, K., & Nihlen, A. (2007). Studying your own school: An educator’s guide to practitioner action research (2nd ed.). Thousand Oaks, CA: Corwin Press.

Cochran-Smith, M., & Power, C. (2010). New directions for teacher preparation. Educational Leadership, 67 (8), 7-13.

Danielson, C. (2010, December/2011, January). Evaluations that help teachers learn. Educational Leadership, 68 (4), 35-39.

Darling-Hammond, L. (2012). The right start: Creating a strong foundation for the teaching career. Phi Delta Kappan, 94 (3), 8-13.

Louis, K. S., & Kruse, S. D. (1995). Professionalism and community: Perspectives on reforming urban schools. Thousand Oaks, CA: Corwin Press.

Marzano, R. J. (2011). Effective supervision: Supporting the art and science of teaching. Alexandria, VA: ASCD.

Mielke, P., & Frontier, T. (2012). Keeping improvement in mind. Educational Leadership, 70 (3), 10-13.

CITATION: Radoslovich, J., Roberts, S., & Plaza, A. (2014). Charter school innovations: A teacher growth model. Phi Delta Kappan, 95 (5), 40-46.

ABOUT THE AUTHORS

Andres Plaza

ANDRES PLAZA is a science instructor at South Valley Academy.

Julie Radoslovich

JULIE RADOSLOVICH is head teacher and a language arts instructor at South Valley Academy, Albuquerque, N.M.

Shelley Roberts

SHELLEY ROBERTS is a practitioner action research instructor at the University of New Mexico and an educational mentor at South Valley Academy.