A free cost-utility analysis tool can help school leaders make evidence-based decisions about how to spend their money.

A few years ago, we visited the budget director at a school district our university research team collaborates with, hoping to convince him that the decision-making framework we were developing with some of his colleagues would help him make good investment decisions for his schools. It was budget season, so he was barricaded behind giant screens and mounds of documents. Despite our sunniest greeting, he didn’t seem pleased to see us.

He pointed to a stack of binders piled high in the corner of his office. “See those?” he griped. “Inside are two to three pages on each of the hundreds of programs and contracts that I am asked to fund every year. I have no idea which ones actually help the kids do better. Every year, new things are added to the list, and no one ever takes anything away. So how do I decide what to fund? That’s what I need help with. If you can solve that problem, let’s talk. If not, go away.”

We could understand his irritation because we hear this problem from school and district leaders all the time. Making evidence-based decisions in schools seems impossible when there are hundreds of activities to evaluate, little to no research evidence about most of them, and immovable budget deadlines to meet. Everyone wants a program that “works,” but working can mean different things to different stakeholders. It could mean, for example, improving test scores or grades, promoting positive behavior, fostering creativity, or any number of other outcomes. Which ones are most important? Never mind that improving outcomes for students is but one consideration for the “what works” question. Which tools, programs, strategies, and interventions will fit with the school’s mission? Earn buy-in from the teachers? Invoke the ire of parents? Find favor with a board member? Unnecessarily duplicate programs already in place? How can time-strapped decision makers conquer this overwhelming challenge?

A decision-making framework

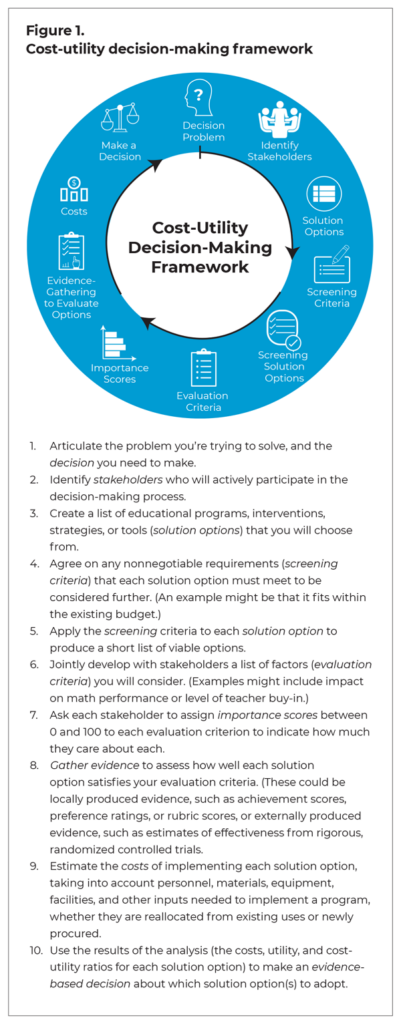

Working with schools, districts, and current and aspiring school leaders, we developed a 10-step decision-making framework to tackle these kinds of complex decisions (Hollands, Pan, & Escueta, 2019). It relies on cost-utility analysis, which is widely used to inform decision making in other fields, especially health care (Robinson, 1993), to determine what programs will have the highest return on investment (ROI) — in other words, what programs will be most worth the cost. Cost-utility analysis evaluates programs by addressing two main questions: What resources are required to implement the programs, and how useful or satisfying is each program to stakeholders? The first part — cost — is usually easier to address because, with adequate information about resource requirements, school and district leaders generally have a good handle on whether they have the staff and budget to implement a program. The second part — utility — is what our framework tackles.

We’ve guided education decision makers to assess a program’s utility by a process based on Ward Edwards’ multi-attribute utility theory (Edwards & Newman, 1982), which involves articulating a problem, identifying possible solutions, determining what stakeholders care about, gathering evidence to score each solution option against these criteria, and ranking the options by cost, utility, or cost per unit of utility. The solution option with the lowest cost-utility ratio yields the highest ROI because it costs the least for each unit of utility or “stakeholder satisfaction.” In short, cost-utility analysis is a systematic, evidence-based approach to getting the most bang for your buck. Figure 1 illustrates the 10 steps of the framework.
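The ranking arithmetic described above can be sketched in a few lines of Python. This is a minimal illustration that assumes a simple weighted-additive utility (each criterion score multiplied by its weight, then divided by the total weight); the DecisionMaker tool's exact calculation may differ. The program names, criteria, weights, scores, and costs are all hypothetical.

```python
def utility(scores, weights):
    """Weighted average of criterion scores, assuming all scores share one scale (here 0-10)."""
    total_weight = sum(weights.values())
    return sum(weights[name] * scores[name] for name in weights) / total_weight


def cost_utility_ratio(cost, utility_score):
    """Cost per unit of utility; lower means more utility for each dollar spent."""
    return cost / utility_score


# Hypothetical importance weights chosen by stakeholders.
weights = {"student_outcomes": 50, "teacher_buy_in": 30, "fit_with_mission": 20}

# Hypothetical options: cost per student and criterion scores on a 0-10 scale.
options = {
    "Program A": {
        "cost": 400.0,
        "scores": {"student_outcomes": 8, "teacher_buy_in": 6, "fit_with_mission": 5},
    },
    "Program B": {
        "cost": 250.0,
        "scores": {"student_outcomes": 6, "teacher_buy_in": 7, "fit_with_mission": 9},
    },
}

results = []
for name, option in options.items():
    u = utility(option["scores"], weights)
    results.append((name, u, cost_utility_ratio(option["cost"], u)))

# Rank by cost-utility ratio, lowest (best ROI) first.
results.sort(key=lambda row: row[2])
for name, u, ratio in results:
    print(f"{name}: utility={u:.2f}, cost per unit of utility={ratio:.2f}")
```

With these made-up numbers, Program B ranks first because it has the lower cost per unit of utility.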

The free online DecisionMaker tool (www.decisionmakertool.org) can help leaders move through the process and calculate a utility score for each option (Hollands et al., 2019). The tool prompts users to describe the problem they are trying to solve, list the solutions they will consider, select criteria for evaluating the options, weight the criteria according to importance, gather information to score each option against the criteria, and input the results of their evidence-gathering and an estimate of the cost to implement each solution option. The DecisionMaker will calculate an overall utility score for each solution option as well as scores for each evaluation criterion. It also generates a printable report that users can share with stakeholders.

While there are several other frameworks that can help leaders identify and articulate their schools’ needs (e.g., Data Wise or the Plan-Do-Study-Act cycle), they do not necessarily help determine which potential solution option is the best fit for addressing the problem. DecisionMaker is unique in that it helps leaders make that determination. Additionally, it enables leaders to quantify the value of different programs using a variety of criteria simultaneously, as opposed to focusing on only one criterion, such as impact on student outcomes.

Four key aspects of this decision-making framework make it especially valuable. First, we all know that decisions are not made based purely on rational considerations (Bonabeau, 2003). The framework allows leaders to simultaneously consider both the objective and subjective factors influencing a decision. Second, it meaningfully involves stakeholders in the decision-making process while still allowing the lead decision maker to determine whose voices count the most. For example, when choosing a social-emotional learning curriculum, a decision maker might want to consider student preferences but give teachers greater weight in the decision. Third, the framework provides transparency and accountability to ensure that the decision-making processes are consistent and fair. Finally, the framework meets the requirement under the Every Student Succeeds Act that schools and districts invest in evidence-based educational activities, strategies, and interventions.

Like any decision-making process, this framework may involve moving through some steps multiple times before reaching a final conclusion. Exactly what the process looks like will vary, depending on the situation. Let’s see the framework in action by reviewing how two leaders recently used it to inform their decisions.

Supporting teachers during COVID-19

Mara is the director of curriculum and instruction in a small school district. Last summer, one of her top concerns was how best to help her teachers “create learning experiences that transition seamlessly from physical to virtual” during the COVID-19 pandemic. She brought together principals and a few teachers to discuss possible professional support solutions that would fit the district’s existing professional development budget, be deliverable by existing staff within the current school schedule, and have a high likelihood of teacher participation. To find ideas, the team consulted nearby districts and reviewed a few studies and reports from Best Evidence Encyclopedia, the Education Resources Information Center (ERIC), and the Institute of Education Sciences. They settled on four strategies to evaluate more thoroughly:

- Elect lead teachers at each grade and give them a platform to share best practices each week.

- Meet weekly in a professional learning community (PLC) on distance learning.

- Videotape short lesson snippets each week and meet to review and discuss the snippets monthly.

- Create targeted mini-professional development (PD) sessions to deliver before or after school.

This brought the team through the first five steps of the process, but now came the really hard task — choosing which strategy to adopt. For steps 6 and 7, the team agreed on six evaluation criteria to apply to each of the solution options and decided that the most important criterion that each solution option had to meet was being “user-friendly” to maximize teacher participation. The other criteria involved teacher workload, required resources, potential pedagogical improvement, teachers’ ability to implement, and technical support requirements.

Now it was time to begin gathering relevant evidence to make a comprehensive assessment of how well the four options met each of the six criteria. For the most important criterion, user-friendliness, Mara wrote brief descriptions of how each professional support strategy would work and asked her district’s teachers to rate each one on user-friendliness using a scale of 0-10. Next, she consulted with experienced colleagues to estimate how many hours per week each option would add to teachers’ workloads. Third, she counted how many new resources would be needed to implement each strategy. Fourth, she scored each solution option against a rubric designed to assess how well a PD solution might improve teacher pedagogical skills. Fifth, she reviewed teachers’ current scores on instructional delivery skills to assess their capacity to implement the option and rated the likelihood of success on a scale of 0-4. Finally, she estimated the number of hours of technical support that she and other administrators would need per week to implement each option.
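Evidence like this arrives in different units (ratings out of 10, hours per week, counts, a 0-4 likelihood), and for some measures a lower number is better. One common way to make such measures comparable is to rescale each onto a shared 0-10 scale before weighting. The sketch below assumes a simple linear rescaling; it is not taken from the DecisionMaker tool, and the raw values and the "worst" and "best" anchors are hypothetical.

```python
def rescale(value, worst, best, top=10.0):
    """Linearly map value so that `worst` becomes 0 and `best` becomes `top`.

    Works for "higher is better" measures (best > worst) and for
    "lower is better" measures such as added workload (best < worst).
    Values outside the worst-best range are clipped to 0 or `top`.
    """
    if best == worst:
        raise ValueError("best and worst must differ")
    scaled = (value - worst) / (best - worst) * top
    return max(0.0, min(top, scaled))


# Hypothetical raw evidence for one solution option.
user_friendliness = rescale(7.2, worst=0, best=10)  # mean teacher rating, 0-10 scale
likelihood = rescale(3, worst=0, best=4)            # likelihood of success, 0-4 scale
workload = rescale(2, worst=5, best=0)              # 2 added hours/week; 5 or more assumed worst

print(user_friendliness, likelihood, workload)
```

Passing the anchors explicitly keeps the direction of each measure visible: for workload, fewer added hours map to a higher score.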

With all this evidence in hand, Mara entered the scores each solution option earned on each of the six evaluation criteria into the online DecisionMaker tool and estimated the cost per teacher of each option. She reviewed the results with her colleagues. As luck would have it, the choice was fairly straightforward. The solution option that earned the highest utility value, the PLC option, was also the least costly to implement.

Not much else went well in 2020, but Mara was able to pick a solution that would provide the greatest stakeholder satisfaction and the highest ROI. When summing up the rationale behind her decision, she said, “The PLC is the most cost-effective and will be the easiest to execute. It also has the added benefit of leading to increased teacher efficacy. It uses less of the administrators’ time, and it utilizes structures already in place.”

Choosing digital learning tools to improve reading performance

Sam is a new assistant principal at Summit High School, which is in one of the largest school districts in the country. Approximately one-third of Summit’s students are current or former English learners (ELs), and most students arrive at Summit reading below grade level. The school allocated funds to address literacy during the 2020-21 school year, and Sam was given the job of determining how to use those funds. He conferred with English language arts teachers in his own and other district schools and set a goal for Summit to improve average student Lexile scores by 150 points.

Sam’s colleagues recommended eight possible solutions to improve student reading performance. To narrow this list down to a more manageable number to evaluate, Sam identified and applied six screening criteria. He was not going to consider any option unless (1) he could find existing evidence of effectiveness; (2) it could be purchased via the district’s online purchasing system; (3) it could fit within the current school schedule; (4) it met his content requirements and learning objectives; (5) it was appropriate for high school students and ELs specifically; and (6) given the uncertainties about virtual vs. in-person instruction, it was available in digital format. These screening criteria effectively whittled the choices down to a short list of three commercially produced digital tools.
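Screening differs from scoring: an option must pass every screening criterion to stay on the list, so there is no weighting or trading off. A minimal sketch of that pass/fail filter follows. The criterion keys paraphrase the six conditions above, and the tool names and their yes/no values are hypothetical.

```python
# Keys paraphrase the six screening criteria; the names themselves are illustrative.
SCREENING_CRITERIA = (
    "has_evidence_of_effectiveness",
    "in_district_purchasing_system",
    "fits_school_schedule",
    "meets_content_requirements",
    "suits_high_school_and_els",
    "available_digitally",
)


def passes_screening(option):
    """An option survives only if it meets every criterion; missing information counts as a failure."""
    return all(option.get(criterion, False) for criterion in SCREENING_CRITERIA)


def shortlist(options):
    """Return the names of the options that pass all screening criteria."""
    return [name for name, facts in options.items() if passes_screening(facts)]


# Hypothetical candidates.
all_yes = dict.fromkeys(SCREENING_CRITERIA, True)
candidates = {
    "Tool X": all_yes,
    "Tool Y": {**all_yes, "available_digitally": False},
    "Tool Z": {"has_evidence_of_effectiveness": True},  # other facts unknown
}

print(shortlist(candidates))
```

Treating unknown facts as failures is a design choice made for this sketch; a team could instead flag such options for more research.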

To assess how well each of these three options might work for Summit, Sam and his colleagues evaluated them against two evaluation criteria: (1) evidence that the tool would improve students’ Lexile scores and (2) the availability of Spanish-language supports. They considered the former evaluation criterion twice as important as the latter.
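A statement such as "twice as important" can be turned into numeric weights by normalizing the relative importance values so they sum to 1. The sketch below is one plausible way to do that, not a description of how DecisionMaker stores weights; the criterion keys are illustrative names.

```python
def normalize_weights(raw_weights):
    """Convert relative importance values into weights that sum to 1."""
    total = sum(raw_weights.values())
    if total <= 0:
        raise ValueError("weights must sum to a positive number")
    return {name: value / total for name, value in raw_weights.items()}


# Lexile evidence counts twice as much as Spanish-language supports.
weights = normalize_weights({"lexile_improvement": 2, "spanish_language_supports": 1})
print(weights)
```

Under this 2:1 split, Lexile evidence carries two-thirds of the overall utility score and Spanish-language supports carry one-third.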

To gather evidence about Lexile score improvements, Sam searched research repositories for empirical, peer-reviewed evaluations of each tool and noted the Lexile change observed for students in those studies. It wasn’t easy for him to find high-quality comparable studies that all measured Lexile scores, and none of the options appeared to yield improvements that were quite as high as his target, so he had to reset his expectations. On the other hand, a close review of the materials on the vendors’ websites showed that two of the tools earned a perfect score on the rubric he and the English language arts teachers developed to assess the quality of Spanish-language supports.

Like Mara, Sam used DecisionMaker to process the scores he collected for each of the three solution options. He also provided total costs of each digital tool for 625 students. He reviewed the summary metrics — costs, utility, and cost per unit of utility — with the school leadership team. Sam’s results were less straightforward than Mara’s because no single tool stood out as the best on all measures. The tool that yielded the best ROI was relatively inexpensive, but it only earned a fair utility rating. The tool that earned the highest utility rating was more expensive, but still affordable. The team settled on adopting both of these digital tools because it seemed well worth the expense to buy a little extra stakeholder satisfaction.
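The reason no single tool "wins" in a case like this is that the three summary metrics can point to different options. The sketch below uses hypothetical costs, utility scores, and a hypothetical budget (none of these figures come from Sam's analysis) to show the option with the best cost-utility ratio differing from the option with the highest utility.

```python
# Hypothetical summary metrics: (name, total cost in dollars, utility on a 0-10 scale).
summary = [
    ("Tool 1", 5000.0, 5.0),
    ("Tool 2", 12000.0, 8.0),
    ("Tool 3", 15000.0, 6.0),
]
budget = 20000.0  # hypothetical funds available


def cost_per_unit_of_utility(row):
    _, cost, utility_score = row
    return cost / utility_score


best_roi = min(summary, key=cost_per_unit_of_utility)    # lowest cost per unit of utility
highest_utility = max(summary, key=lambda row: row[2])   # greatest stakeholder satisfaction
can_afford_both = best_roi[1] + highest_utility[1] <= budget

print(best_roi[0], highest_utility[0], can_afford_both)
```

When the two metrics disagree, the numbers frame the trade-off but the choice remains a judgment call for the leadership team.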

Can this framework help you with your big decisions?

If you have a big decision to make that involves choosing among potential solutions, the cost-utility decision-making framework can help you clearly articulate a problem and possible solutions, authentically engage stakeholders, and utilize evidence to make systematic and transparent decisions. However, you still need to do the work of gathering data to illustrate the problem, identifying solution options, and collecting solid evidence to evaluate the options against your evaluation criteria. The reward for all this work should be stakeholders who are happy with the choices you make as a leader — or who at least feel heard. Of course, the true long-term test of the framework and of your decisions is whether student outcomes improve.

The examples we’ve shared here show how DecisionMaker can be used to facilitate choices between several alternative educational strategies, programs, or tools, but it can also be useful for other kinds of decisions, such as determining which budget items to continue funding, how much to scale up a promising strategy or program, or which candidate to hire from among a number of potential candidates. This framework is most useful for major decisions with multiple potential solutions from which to choose, but it is less useful when there aren’t multiple options to consider or when decision makers cannot afford the time and effort for evidence gathering. In addition, while DecisionMaker boils usefulness of programs down to numbers for ease of comparison, some things are hard to capture numerically. It’s always advisable to present the results of a quantitative analysis alongside some qualitative information, such as a summary of the pros and cons of each solution option.

The Resources & Guidance pages on the DecisionMaker website provide links to repositories of research evidence where you might find ideas for solution options and evidence about these programs’ effects on students. We also created the Relevance and Credibility Indices (https://capproject.org/rici), which are rubrics designed to help decision makers evaluate existing studies of educational programs to determine how seriously to take their findings and how well the findings might apply in their own context. All of these resources can be useful when gathering evidence to evaluate your options.

We designed DecisionMaker with busy school and district leaders in mind, and to date, people from 14 countries and 30 U.S. states and the District of Columbia have registered to use the free tool. And our friend, the budget director, has since become his district’s chief financial officer, while we have continued to work with program officers in his district office to apply the framework to their decisions about which reading resources to use in their turnaround schools and which programs and practices to purchase with Title I funds. Our hope is that more leaders like him will find this tool a useful way to sort through the mounds of information about what they can do to arrive at a decision that makes sense for their district and, most of all, their district’s students.

References

Bonabeau, E. (2003). Don’t trust your gut. Harvard Business Review, 81(5), 116-123.

Edwards, W. & Newman, J.R. (1982). Multiattribute evaluation. Sage Publications.

Hollands, F., Pan, Y. & Escueta, M. (2019). What is the potential for applying cost-utility analysis to facilitate evidence-based decision-making in schools? Educational Researcher, 48(5), 287-295.

Hollands, F.M., Pan, Y., Levin, H.M., Corter, J., Escueta, M., Menon, A., Muroga, A., Kushner, A. & Kazi, A. (2019). DecisionMaker. Teachers College, Columbia University. www.decisionmakertool.org

Robinson, R. (1993). Cost-utility analysis. British Medical Journal, 307(6908), 859-862.

ABOUT THE AUTHORS

ANNA KUSHNER is a researcher and doctoral student studying education policy at Teachers College, Columbia University.

FIONA HOLLANDS is a senior researcher in the Department of Education Policy & Social Analysis at Teachers College, Columbia University.