Student cheating is nothing new. What sets it apart in the AI age, and how should educators respond?

At a Glance

- Teachers have expressed concern about how many students are using artificial intelligence (AI) to complete their assignments.

- Data shows that students are using AI more, but the overall amount of cheating remains about the same as it has been in the past.

- Certain uses of AI may be considered cheating by some teachers and considered acceptable by others.

- Discussing AI use with students and colleagues can improve AI literacy, create consistency in AI use policies, and promote behaviors that enhance learning rather than replace it.

Whenever educators discuss artificial intelligence (AI) in schools, someone calls out the elephant in the room: cheating. Since the November 2022 release of ChatGPT, educators everywhere have worried that students would use this new technology to write their papers and complete their assignments for them. Now, a couple of years into this AI revolution, we have a growing number of generative AI chatbots (like ChatGPT, Gemini, and Claude), some alarming news headlines about cheating with AI, and plenty of personal anecdotes about students using AI to cheat on schoolwork. Every time we facilitate professional development or meet with schools, someone asks how to keep this from happening. Is there a good AI detector they can use? Should they go back to paper-and-pencil writing?

The “cheating” elephant was in the room long before AI entered and made it a hot topic. Long-standing research with large numbers of students from around the world has found that upwards of 80% of high school students engage in some form of cheating behavior (Conner, Galloway, & Pope, 2009; Josephson Institute, 2012; Wangaard & Stephens, 2011). And, surprisingly, a study of the same schools before and after the release of ChatGPT found that cheating rates remained about the same (Lee et al., 2024).

So how are we supposed to understand the relationship between AI and cheating? Based on the research, we recommend understanding cheating as something that has long been a persistent and rather large challenge for schools to address. AI is not opening the floodgates of cheating where it had never previously existed. Rather, it represents a change in methods for a long-standing practice.

Cheating: Past and present

A growing database covering more than 350,000 high school students, managed by Challenge Success (a Stanford University-affiliated K-12 nonprofit that helps schools redesign their school climates), supports this idea. The group’s survey includes questions about specific behaviors associated with cheating, such as copying from another student’s paper or using an unauthorized device during an assessment. The questions are worded carefully to ask about behaviors without judgment and without using the term “cheating.”

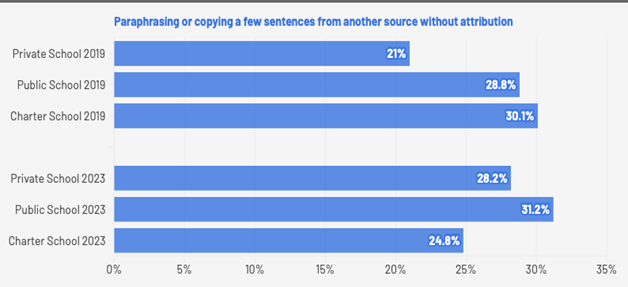

The results (Figure 1) show that, consistent with previous years, high school students in 2019 were paraphrasing and copying a few sentences from other sources without attribution, a behavior we tend to label as plagiarism. When asked whether they had engaged in this behavior in the past month, 21% to 30.1% of students reported doing so. AI chatbots were not as popular or capable then. In 2023, after AI had captured everyone’s attention, the numbers looked similar, with 24.8% to 31.2% of students reporting this behavior. The slight difference falls within what researchers would call “noise.”

Source: Challenge Success survey data

Why, then, is there so much alarm about cheating and AI? One reason is that AI may feel different from other technology tools students used for cheating, such as calculators or smartphones, because AI can almost immediately produce unique text and other outputs for each student query.

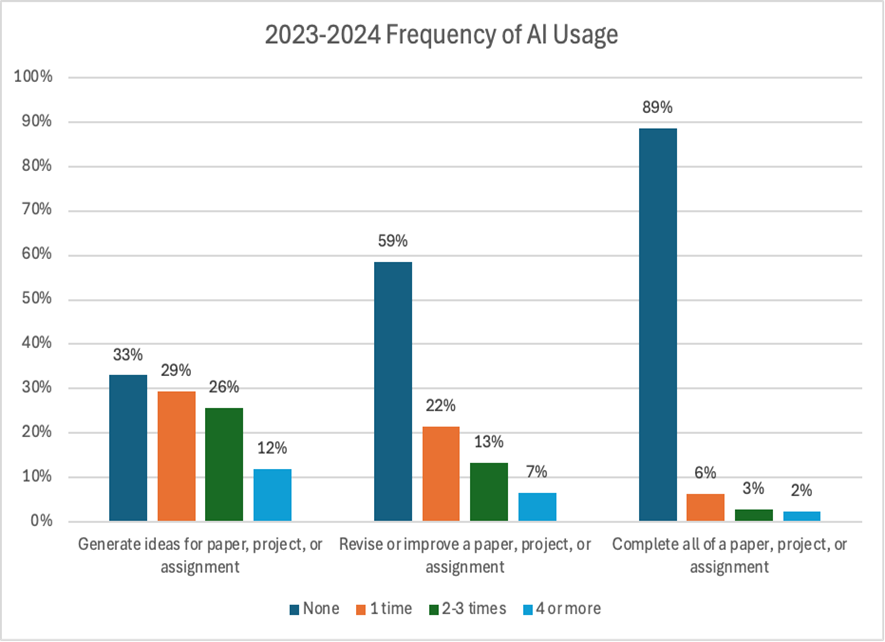

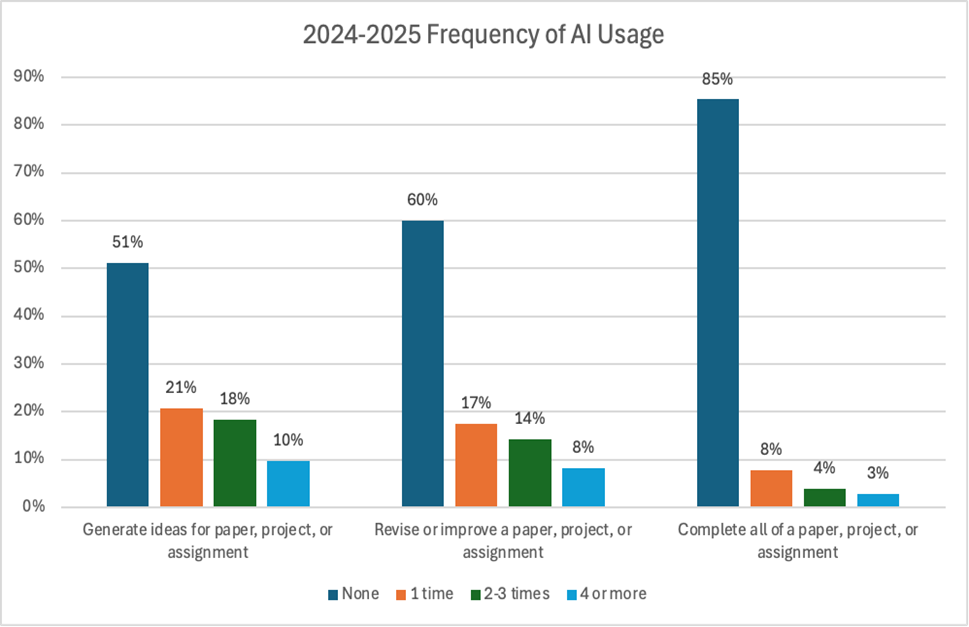

Teachers fear that they are now spending time reading and providing feedback on papers written entirely by AI. However, Challenge Success data from the last two school years (2023-24 and 2024-25) suggest that most students consider having AI complete an entire paper a step too far. Nine out of 10 students do not report doing this. Still, having 1 out of 10 papers be fully AI-generated is a lot when you’re working with 30 to 150 students; that could mean roughly 3 to 15 such papers.

Research suggests that similar numbers of students were turning in copied or purchased papers long before AI hit the scene. They may have bought papers from online homework services or paid an entrepreneurial classmate who made extra cash by selling completed papers and projects. This may sound familiar from our own days as students; the practice is so common that it often shows up in television shows and movies about high school. Wholesale outsourcing of schoolwork is a clear problem, whether or not AI is available.

The blurry definition of cheating

What is more complicated in the AI era is that almost half of students are using AI in some way to help with their schoolwork. For instance, in the 2024-25 school year, 49% of students reported on our Challenge Success survey that they used AI to generate ideas for their schoolwork, and 40% used AI to revise or improve a paper, project, or assignment. For students and teachers, it is not always clear whether these behaviors count as cheating (Mah et al., 2024). Students use AI to check their spelling and grammar, and many educators do not consider that cheating (some may even expect students to do it before submitting their work). On the other hand, if students use AI to come up with some initial ideas and then develop those ideas into interesting, high-quality products, educators may disagree about whether that is acceptable.

To see how complicated this can get, consider this story that a high school student shared when we asked how they used AI:

I use it to check answers on math a lot. So I’ll do a problem and then after I finish doing the problem, I’ll check it with AI, and if I didn’t get it right, I’ll try to think about the solution on my own. But if I’m really lost, then I’ll just look at what it did for the solution and try to understand from that.

As educators, do we feel that this is an acceptable use? Some teachers might accept or even encourage it, while others might feel it crosses an ethical line, particularly if the homework is being graded. Given the lack of consensus, it is no surprise that teachers in the same school or even the same department are coming up with very different policies about AI use. Students complain that policies are unclear, nonexistent, or even contradictory.

Many students also describe cases in which they or a classmate were accused of using AI despite insisting that they had not. At one high school, a student shared with us:

I know of a lot of people whose work got flagged for AI even when they didn’t use it at all. And this has happened with essays and [also] computer science assignments just because they studied ahead of the coursework, and the teachers were suspicious and [the students] got written up.

This is a major source of frustration for students. It also raises serious questions about fairness, because writing by students who are still developing their English language proficiency, such as emerging multilingual students and English learners, is disproportionately flagged by detectors as AI-generated (Liang et al., 2023).

We have even heard of students who consider themselves middling writers being wrongly accused of using AI, and then turning to AI to modify their work so they would not be accused of using AI! If students are engaging in such behaviors, we need to ask whether fear of AI use in schools is adding undue stress and worry to students and adults alike.

Strategies for navigating AI in your school

Based on our research and our work with educators and students around the globe, we offer the following strategies to help foster ethical and productive AI use in schools:

Listen to students

Students have a lot to say about AI, but often they see AI as a taboo topic to discuss with teachers. The data so far suggests that, while students have complicated perspectives about certain use cases, they have a sense of which behaviors are not acceptable.

Learning more about students’ views on acceptable and unacceptable AI use can lead to policies that respond to both student and teacher concerns. When forming or revising AI policies in schools, we encourage educators to work with students to clearly define what does or does not count as cheating and to invite student input on AI detection, consequences, and policy communication and enforcement.

Enhance AI literacy

AI literacy includes knowing what AI can and cannot do well and whether to use it for a given task. Do students know about AI hallucinations and misinformation, and about how some AI tools are designed to keep students using them even when they do not need to? Are they aware of the privacy and energy-use concerns that persist with commercial AI products?

An ongoing concern is that when students use AI, they are not thinking critically or learning the material. Some research suggests that higher AI literacy is associated with lower receptivity to using AI (Tully et al., 2025), perhaps because a better understanding of what AI can and cannot do leads to more measured and thoughtful use. Resources and lessons are becoming available to help teachers integrate AI literacy into classroom instruction. For example, Stanford University has developed CRAFT (Classroom-Ready Resources About AI For Teaching, http://craft.stanford.edu), a repository of dozens of lessons co-developed by teachers and university researchers that explore how AI relates to different school subjects. AI literacy lesson materials are also available through groups like Common Sense Media (http://commonsense.org).

Collaborate with colleagues

Students we surveyed expressed frustration that, across their school day, what they’re allowed to do with AI changes in ways that seem arbitrary to them. Even within teaching teams or departments, perspectives on AI can vary. Students taking the same course with different teachers may encounter different policies, creating inconsistency.

As a professional community, convene students, teachers, administrators, and others to devise policies and activities that are consistent and provide meaningful learning for students. Think about why different policies and concerns might apply to different types of activities. For example, AI’s tendency to give confident-sounding but erroneous information raises a different set of issues than concerns about intellectual property rights. Think carefully about how potential classroom uses relate to each of these concerns.

Remind students that the goal is learning

Human learning requires work. Struggle, persistence, and mistakes are necessary parts of learning. What is important to learn may need to change now that AI can speed up some tasks (Ginsberg & Zhao, 2025). However, there will always be important things we must learn that require us to put in the work.

Talk with your students about why they want to use AI. Are they using it to cut corners because they feel overloaded, worry about grades, do not understand the material, or have trouble finding help? It can be difficult to resist a quick fix when you feel unsupported, unwell, or unmotivated. When adults and students feel connected, supported, and engaged in the learning process, behaviors traditionally considered cheating tend to decline (Stephens & Gehlbach, 2007).

Openly discussing AI use, creating consistent and effective policies, and strengthening student-teacher relationships can mitigate some of the stress and worry around AI use and lead to greater learning overall.

References

Conner, J., Galloway, M., & Pope, D. (2009). Student integrity in high-performing secondary schools [Conference presentation]. Annual Meeting of the American Educational Research Association (AERA), San Diego, CA.

Ginsberg, R., & Zhao, Y. (2025). Reconsidering literacy in an AI world. Phi Delta Kappan, 106(7-8), 44-47.

Josephson Institute. (2012). 2012 Report card on the ethics of American youth resource document.

Lee, V.R., Pope, D., Miles, S., & Zárate, R.C. (2024). Cheating in the age of generative AI: A high school survey study of cheating behaviors before and after the release of ChatGPT. Computers and Education: Artificial Intelligence, 7, 100253.

Liang, W., Yuksekgonul, M., Mao, Y., Wu, E., & Zou, J. (2023). GPT detectors are biased against non-native English writers. Patterns, 4(7).

Mah, C., Walker, H., Phalen, L., Levine, S., Beck, S., & Pittman, J. (2024). Beyond cheatbots: Examining tensions in teachers’ and students’ perceptions of cheating and learning with ChatGPT. Education Sciences, 14(5), 500.

Stephens, J.M., & Gehlbach, H. (2007). Under pressure and underengaged: Motivational profiles and academic cheating in high school. In E.M. Anderman & T.B. Murdock (Eds.), Psychology of academic cheating (pp. 107-134). Academic Press.

Tully, S.M., Longoni, C., & Appel, G. (2025). Lower artificial intelligence literacy predicts greater AI receptivity. Journal of Marketing, 89(5), 1-20.

Wangaard, D.B., & Stephens, J.M. (2011). Academic integrity: A critical challenge for schools. Excellence & Ethics, Winter 2011.

Acknowledgements

This article was made possible through the support of Grant 63355 from the John Templeton Foundation. The opinions expressed in this publication are those of the authors and do not necessarily reflect the views of the John Templeton Foundation.

This article appears in the Winter 2025 issue of Kappan, Vol. 107, No. 3-4.

ABOUT THE AUTHORS

Victor R. Lee

Victor R. Lee is an associate professor at the Stanford University Graduate School of Education and the faculty director of the AI+Education initiative at the Stanford Accelerator for Learning in Stanford, California. He is the author of Advancing Data Science Education in K-12: Foundations, Research, and Innovations (Routledge, 2025).

Denise Clark Pope

Denise Clark Pope is a senior lecturer at the Stanford University Graduate School of Education and co-founder/strategic adviser at Challenge Success, both in Stanford, California. She is the author of Doing School: How We Are Creating a Generation of Stressed Out, Materialistic, and Miseducated Students (Yale University Press, 2023) and co-author of Overloaded and Underprepared: Strategies for Stronger Schools and Healthy, Successful Kids (John Wiley and Sons, 2015).

Sarah Miles

Sarah Miles is the director of research at Challenge Success. She is a co-author of Overloaded and Underprepared: Strategies for Stronger Schools and Healthy, Successful Kids (John Wiley and Sons, 2015).